With talk of large companies investing in AI weapons, brain chips being developed to enhance soldiers, and the head of the British armed forces saying that robot soldiers could make up a quarter of the army by the 2030s, people might have some doubts about where this is all going. In a recent survey, twelve percent of Americans said AI could lead to human extinction.

There’s good news though. Terminator-style robots aren’t busting through your door any time soon, according to one AI expert.

That’s because these types of self-aware robots require something called artificial general intelligence, or AGI, which is very far in the future, if we even get there at all.

What we have today is “narrow AI,” which is specialized to do just one specific task.

Narrow AI might appear intelligent, but the illusion disappears once you look under the hood.

“None of these seemingly intelligent pieces of software have any real intelligence in them, and more importantly, we can’t get from here to there,” AI expert Steven Shwartz told MetaStellar. “In other words, we can’t get from the current paradigm of AI into truly intelligent software.”

According to Shwartz, a self-aware robot coming to get you might be as likely as somebody inventing a time machine: it’s not happening any time soon, if ever.

In “Evil Robots, Killer Computers, and Other Myths: The Truth About AI and the Future of Humanity,” Shwartz explains how AI actually works in simple terms, why it might be a little premature to worry about self-aware robots taking over the world, and what society will have to do to maximize the positive impacts of AI while minimizing the negative effects.

This is a good time for the book to come out.

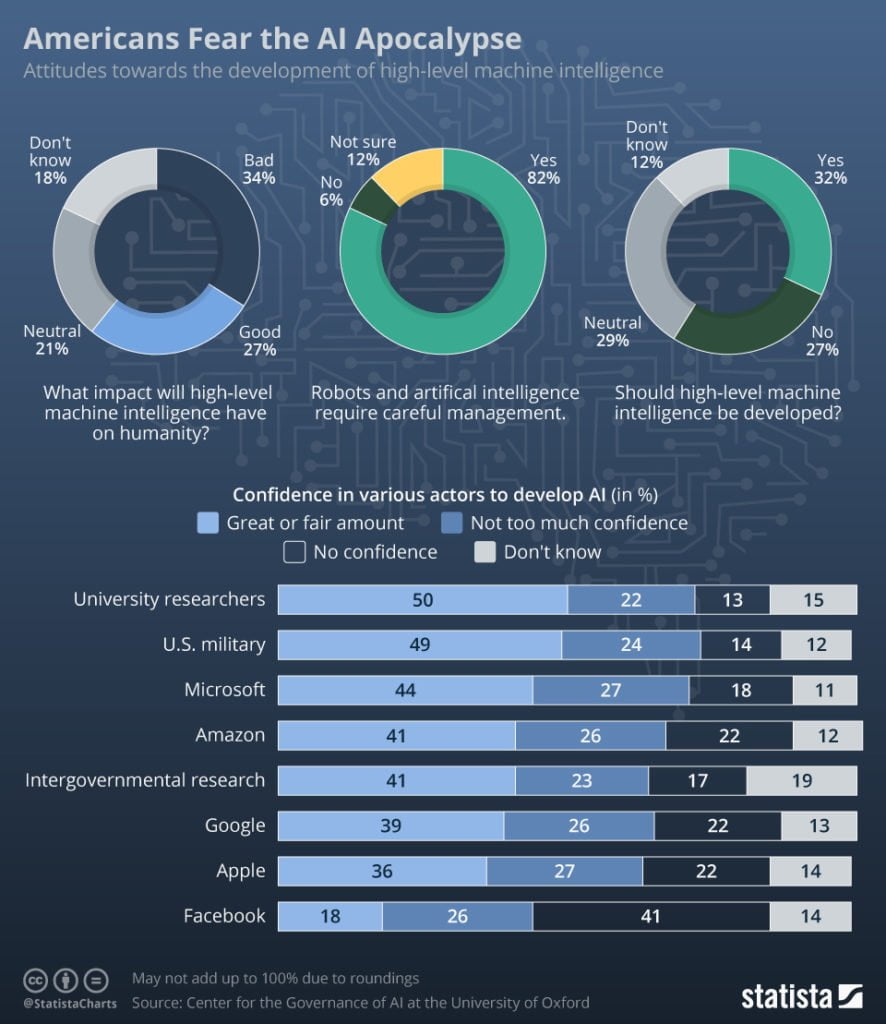

A survey conducted by Oxford University’s Center for the Governance of AI found that many Americans fear a future where AI becomes too intelligent. Thirty-four percent of respondents thought such AI would have a negative impact. In fact, twelve percent said it would lead to human extinction.

Only twenty-seven percent of respondents believed in a positive outcome.

Shwartz’s upcoming book can be read by someone with no previous knowledge of artificial intelligence, and a little education could help reduce the fear of the unknown that an AI-driven future presents.

“Evil Robots, Killer Computers, and Other Myths: The Truth About AI and the Future of Humanity,” published by Fast Company Press, will be available on February 9, 2021.

Edited by Maria Korolov

MetaStellar news editor Alex Korolov is also a freelance technology writer who covers AI, cybersecurity, and enterprise virtual reality. His stories have also been published at CIO magazine, Network World, Data Center Knowledge, and Hypergrid Business. Find him on Twitter at @KorolovAlex and on LinkedIn at Alex Korolov.