Copyright law allows for fair use: copyrighted work can serve as inspiration for new and transformative creations. That’s basically how humans work. We see stuff, we read stuff, and that inspires the new stuff we create.

It’s a common misconception that AI art generators cut and paste from existing work. They don’t. They are trained on images, both public domain and copyrighted, draw inferences about what those images look like and how they relate to their descriptions, then forget all the data they were trained on. When they’re asked to generate a new image, they create one from scratch.

Sometimes, this will have weird results. For example, if some of the training images had watermarks, the AI might think that images are supposed to have things that look like watermarks on them, and it will try to create its own version of a watermark.

Sometimes, when the training data contains a lot of examples of a particular image, the one that the AI creates will be very similar to the original.

For example, I asked Midjourney to create an image for me based on the prompt “Mona Lisa.” As you can see from the results below, it’s kind of what you would get if you asked me, say, to draw the Mona Lisa from memory, assuming, of course, that I could draw. Which I can’t. The face, the hair, the clothes, the background — all are slightly different from the original. This, even though there must have been quite a few examples of the actual Mona Lisa in Midjourney’s training data set.

So, under today’s common understanding of copyright law, AI image generators are legal because they are transformative.

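In its 2021 decision in the lawsuit between Google and Oracle, the Supreme Court held that Google’s copying of parts of Oracle’s Java API to build Android was fair use, in part because Google used the copied material to create something new and transformative. The decision is often cited in support of the idea that using existing material, even material under copyright, to create new works can be considered fair use.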

However, there are several other lawsuits working their way through the courts. We might see new case law come out, based on a new interpretation of what it means to be transformative.

I also expect to see pressure on regulators from creators and industry players to pass legislation. Though, for the most part, businesses stand to gain quite a bit from generative AI, so any new laws probably won’t be particularly onerous for the AI companies.

In the most extreme case, generative AI companies will need to have permission from copyright owners before using copyrighted content to train their models. In that case, we can expect to see new versions of the AI models, trained only on public domain and permissioned text and images, quickly replacing the current ones.

“We are working on fully licensed datasets plus opt-out mechanisms for future model development that we do and support,” said Stability AI founder and CEO Emad Mostaque in a Reddit AMA late last year. “We will make some announcements about this soon. It should be noted that these models are unlikely to ‘mature’ for the next year so will get upgraded regularly.”

Stability AI makes Stable Diffusion, the most popular open source AI image generator, used behind the scenes by most new image startups.

So we will still have AI models, just with a smaller training data set. Or, at least, a different training data set. And with ongoing improvements in AI technology, we probably won’t see much difference in image quality. The one major change will be that people will no longer be able to ask for images or text to be created in the style of artists and writers whose work was not part of the training data set.

Another possibility is that artists and writers will be compensated if people create art or text in their style. This is what Shutterstock is already doing.

Whatever happens, the technology won’t go away. It’s already out there in the form of open source software, which anyone can download and use. It is also expected to help drive significant economic growth, so we shouldn’t expect any legislation to be too heavy-handed.

Short-term best practices

Until the courts and regulators decide what to do about AI text and image generation, what should AI users be doing?

My suggestion (and I am not a lawyer, so please do not take this as legal advice) starts from the observation that writers and artists are most upset when work is created and sold based on their signature styles.

Basically, unscrupulous people are creating deep fakes of those artists’ works and selling them as if they are authentic images. This directly takes income away from artists.

Don’t do this.

It’s also illegal under current laws, because it’s false advertising.

Next, there are people selling images that are clearly labeled as “in the style” of particular artists. This is not currently illegal, and probably won’t become illegal since the entire global fashion industry might well collapse as a result. But it’s not nice.

If I were an artist, I would not be marketing AI-generated art as being in the style of another artist. I don’t think I’d be sued, because you can’t copyright a style, but it would definitely be in very bad taste.

And if I were someone using AI-generated images (a company, for example, using AI images for marketing or training materials, or a book author looking for a new book cover design), I would avoid using the phrase “in the style of” in combination with the names of artists whose works are currently under copyright. Instead, I would use a more general description, such as “black and white photograph,” “comic book illustration,” or “watercolor painting,” or the names of artists whose works are now part of the public domain.

Or, I would use AI-generated images from a company like Shutterstock, which compensates artists for the use of their names in image prompts.
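If you write a lot of prompts, the “in the style of” rule of thumb above can be turned into a quick automated check. Here is a minimal Python sketch of the idea. Everything in it is an assumption made for illustration: the placeholder artist names, the `AVOID_ARTISTS` set, and the `flag_prompt` function are hypothetical, and you would have to maintain your own list of names. It is not a legal test of anything.

```python
import re
from typing import List, Set

# Hypothetical placeholder names, for illustration only. A real list would
# have to be maintained by hand by whoever is writing the prompts.
AVOID_ARTISTS: Set[str] = {"jane example", "john placeholder"}

# Capture whatever follows "in the style of", up to the next comma,
# period, or semicolon.
STYLE_PATTERN = re.compile(r"in the style of\s+([^,.;]+)", re.IGNORECASE)


def find_style_references(prompt: str) -> List[str]:
    """Return every name that follows 'in the style of' in the prompt."""
    return [name.strip() for name in STYLE_PATTERN.findall(prompt)]


def flag_prompt(prompt: str, avoid: Set[str] = AVOID_ARTISTS) -> List[str]:
    """Return the style references in the prompt that are on the avoid list."""
    return [
        name
        for name in find_style_references(prompt)
        if name.lower() in avoid
    ]


if __name__ == "__main__":
    prompts = [
        "A castle at dusk, in the style of Jane Example, watercolor",
        "A castle at dusk, watercolor painting",
    ]
    for prompt in prompts:
        print(prompt, "->", flag_prompt(prompt))
```

A prompt that relies on a general description, such as “watercolor painting,” comes back with an empty list, while one that names an artist on the avoid list gets flagged so you can rewrite it.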

Who owns the copyright to AI text and images?

Finally, there’s the question of copyright.

Currently, the images created by generative AI tools cannot be copyrighted. Just as, if you roll a pair of dice, you can’t then copyright the number that comes up.

AI-generated images are all currently considered to be public domain. That means that anybody out there can use them for any purpose.

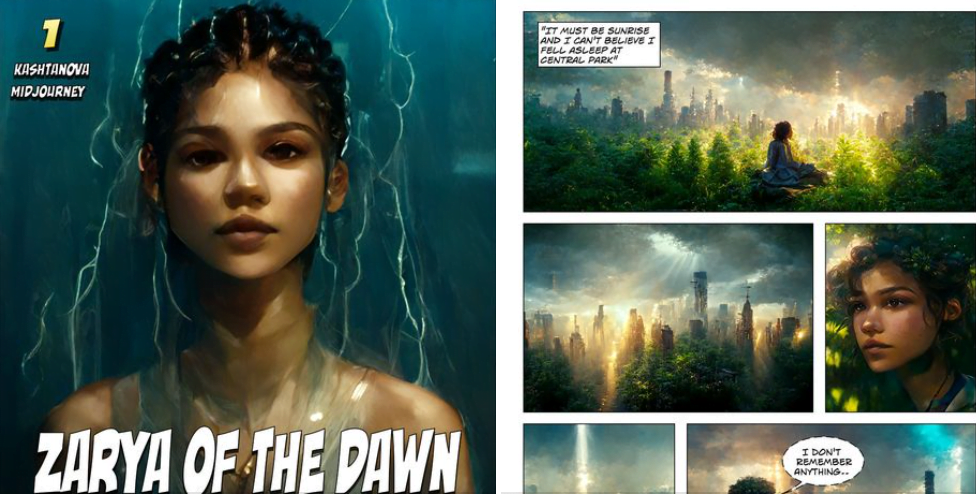

Just last week, the US Copyright Office reaffirmed this policy in its copyright registration for the comic book Zarya of the Dawn.

In his letter describing the decision, Robert J. Kasunic, director of the Office of Registration Policy & Practice, said that the specific images in that comic book, which were created using Midjourney, are not protected by copyright. But the accompanying text, as well as the selection, coordination, and arrangement of the comic’s written and visual elements — all of that is protected.

That means that once you take an AI-generated image and make it a part of a bigger project, such as an Instagram post or a book cover, then the entire thing falls under copyright law. So, not the image itself, but the whole cover — the composition, the layout, the text design, and so on.

So, yes, if you use an AI-generated image, that same image might pop up on someone else’s cover and there’s nothing you can do about it. However, this is similar to the situation where stock images are used to create book covers, since those same stock images can show up elsewhere as well.

But if you read that letter closely, there’s something very interesting buried in there.

Kasunic wrote, “The fact that Midjourney’s specific output cannot be predicted by users makes Midjourney different for copyright purposes than other tools used by artists.”

Does that mean that AI image generators that do allow more user control will fall under copyright? Say, if they support more finely-tuned prompts, pre-set poses, and seed images. Controls that allow artists to predict, and to affect, the results?

ControlNet, for example, which was released earlier this month, allows drawings, scribbles, photographs, and pose examples to be used in the generation of new images.

These advanced AI tools don’t just generate a random image that’s somewhat close to your prompt but instead allow you a great deal of fine-grained control. Will the Copyright Office rethink its policies on AI-generated images once the tools get more predictable?

I’d hate to be the person trying to decide how much control is enough to qualify a tool as generating copyrightable content.

And what about AI-generated text?

In the case of the Zarya of the Dawn comic, the author, Kristina Kashtanova, modified some of the images with Photoshop. The Copyright Office said that the modifications weren’t substantial enough to justify copyright.

Now imagine that AI was used to write a short story, or even an entire novel. How much editing of the AI-generated text would you have to do in order to be able to copyright it?

If you do no editing at all, then it seems that the Copyright Office policy is clear — no copyright. Anyone can take your book and sell it under their own name.

And if you use the AI for idea generation, and some outlining and background research, and write the text yourself, then you’re in the clear. But what if you use AI to rewrite your rough draft into cleaner prose? Do you then lose the copyright to your work?

I, for one, will be following the developments in this space with great interest.

And if you submit a story to MetaStellar next month, then I suggest that, for now at least, you use as little AI as possible.

You’d hate to lose the rights to your work just because ChatGPT fixed your grammar for you.

Edited by Melody Friedenthal

MetaStellar editor and publisher Maria Korolov is a science fiction novelist, writing stories set in a future virtual world. And, during the day, she is an award-winning freelance technology journalist who covers artificial intelligence, cybersecurity and enterprise virtual reality. See her Amazon author page here and follow her on Twitter, Facebook, or LinkedIn, and check out her latest videos on the Maria Korolov YouTube channel. She is also the editor and publisher of Hypergrid Business, one of the top global sites covering virtual reality.